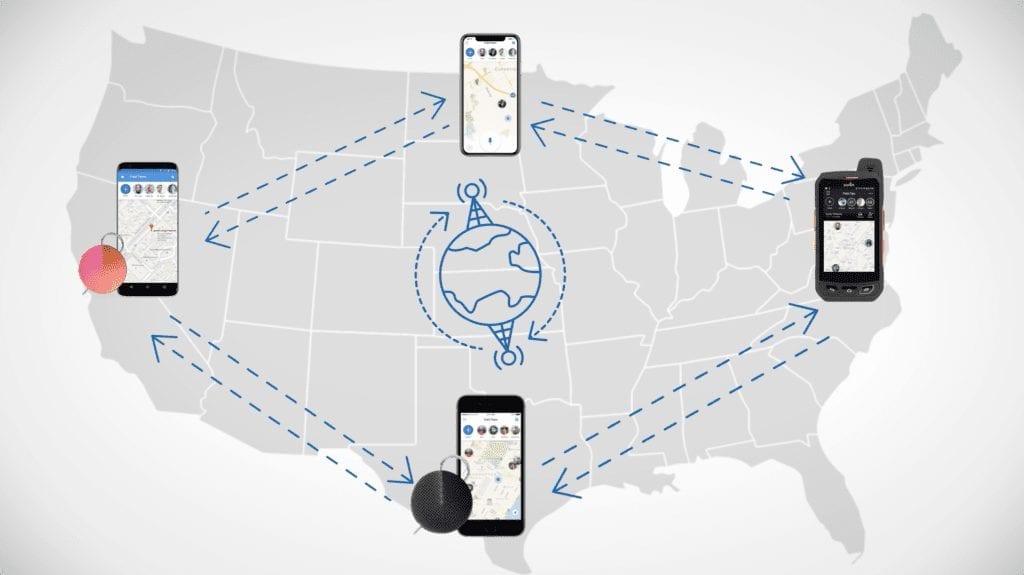

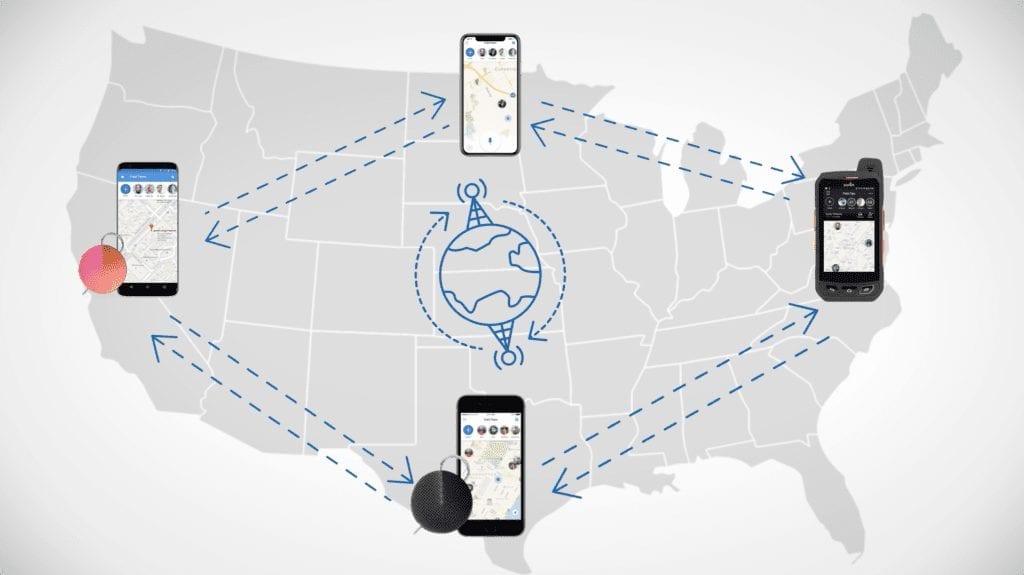

At Orion, we’re always looking to help teams work faster and better. The Orion voice platform keeps teams connected across the globe, and we build our technology with that goal in mind.

One way we’re trying to help our customers move faster is edge caching. With edge caching, our caching provider, Fastly, stores Orion API responses at the “edge” of its network and serves them directly from there. The edge is closer to the end user, so responses arrive with lower latency.

Edge Caching and Edge Computing

In the bigger picture, edge caching is a form of edge computing, a distributed computing paradigm in which computation happens geographically closer to end users or clients. Edge computing can deliver large performance gains for technologies such as artificial intelligence, connected cars, and video, where optimizing for peak load is a constant challenge. For example, during this year’s FIFA World Cup, Akamai delivered streaming video of the event at a staggering 22.52 Tbps, making it Akamai’s most-streamed event to date.

Why Orion Uses Edge Caching

With edge caching, a user’s app doesn’t need to contact Orion’s servers directly for every piece of information. As a result, we can fulfill cached requests up to 40x faster than requests that travel all the way to our origin servers, and our average time to first byte (TTFB) on a cache hit is under 10 milliseconds.

Edge caching doesn’t just mean faster load times for users; it also reduces the load on the Orion API. Requests served from the cache never reach our servers, so Fastly absorbs much of our traffic, which makes our service easier to scale.

In this post, we’ll share some of the technical details of how we use edge caching here at Orion. If you’ve worked on DevOps or cloud infrastructure projects, or done front-end or back-end engineering, some of these concepts may already be familiar. Read on to learn more!

How Orion Caches API Resources

We use response headers to configure the cache time-to-live (TTL): the number of seconds a cached response stays fresh before it expires.

Fastly allows you to set the cache TTL using two headers: Surrogate-Control and Cache-Control. Fastly respects the Surrogate-Control: max-age=$seconds header but strips it before the response continues on to other downstream caches or the client.

Cache-Control is more complex. Fastly respects Cache-Control too, but it also passes the header along, so it can instruct every downstream cache, all the way to the client, to store the response. We can’t force all of those downstream caches to revalidate or purge their copies, so we decided to forgo this header in favor of another solution.
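To make the difference between the two headers concrete, here is a minimal Python model of an edge cache’s TTL handling, assuming the behavior described above: Surrogate-Control takes precedence, Cache-Control’s max-age is the fallback, and only Surrogate-Control is stripped before the response is forwarded. It is a simplified illustration, not Fastly’s implementation; it ignores other freshness sources such as s-maxage and Expires, and it matches header names as case-sensitive dictionary keys.

```python
import re
from typing import Dict, Optional

# Simplified model of an edge cache's TTL handling (not Fastly's code).
# Assumptions: header names are matched as exact dict keys, and
# s-maxage / Expires are ignored for brevity.

_MAX_AGE = re.compile(r"\bmax-age=(\d+)")


def parse_max_age(value: Optional[str]) -> Optional[int]:
    """Return the max-age directive in seconds, or None if absent."""
    if value is None:
        return None
    match = _MAX_AGE.search(value)
    return int(match.group(1)) if match else None


def edge_ttl(origin_headers: Dict[str, str]) -> Optional[int]:
    """TTL the edge applies: Surrogate-Control wins, else Cache-Control."""
    ttl = parse_max_age(origin_headers.get("Surrogate-Control"))
    if ttl is None:
        ttl = parse_max_age(origin_headers.get("Cache-Control"))
    return ttl


def forward_headers(origin_headers: Dict[str, str]) -> Dict[str, str]:
    """Headers passed downstream: Surrogate-Control is stripped, while
    Cache-Control (if present) continues on to other caches and the client."""
    return {name: value for name, value in origin_headers.items()
            if name.lower() != "surrogate-control"}


origin = {"Surrogate-Control": "max-age=1209600", "ETag": 'W/"abc123"'}
print(edge_ttl(origin))         # 1209600
print(forward_headers(origin))  # {'ETag': 'W/"abc123"'}
```

Because only the edge ever sees Surrogate-Control, changing it affects Fastly’s caching without leaving long-lived instructions in browsers or intermediate proxies.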

Still, client-side caching can dramatically improve the user experience, especially when the user is far from the caching provider’s nearest point of presence (PoP). To enable client-side caching while ensuring that browsers don’t serve stale data to our users, we’ve settled on ETags. (For more on ETags, check out Mozilla’s excellent guide.)

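Each client can then apply its own caching policy to responses that carry an ETag, but it should check with the edge cache to see whether the data has changed before serving its locally cached copy. In HTTP terms, the client sends its stored ETag in an If-None-Match request header and gets back 304 Not Modified, with no body, if its copy is still current.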
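The client side of that exchange can be sketched as a small revalidating cache. This is a minimal sketch assuming a hypothetical `fetch(url, headers)` callable that returns `(status, headers, body)`; a real client would use its HTTP library of choice, and browsers perform this revalidation on their own.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, Tuple

# (status, headers, body). `fetch` is a hypothetical stand-in for an HTTP call.
Response = Tuple[int, Dict[str, str], bytes]
Fetch = Callable[[str, Dict[str, str]], Response]


@dataclass
class RevalidatingCache:
    """Client-side cache that revalidates every hit with If-None-Match."""
    fetch: Fetch
    entries: Dict[str, Tuple[str, bytes]] = field(default_factory=dict)

    def get(self, url: str) -> bytes:
        request_headers: Dict[str, str] = {}
        cached = self.entries.get(url)
        if cached is not None:
            # Ask the edge whether our copy is still current.
            request_headers["If-None-Match"] = cached[0]
        status, headers, body = self.fetch(url, request_headers)
        if status == 304 and cached is not None:
            return cached[1]  # Not modified: serve the local copy.
        etag = headers.get("ETag")
        if etag is not None:
            self.entries[url] = (etag, body)  # Remember the new version.
        else:
            self.entries.pop(url, None)  # Nothing to revalidate against.
        return body


def fake_edge(url: str, headers: Dict[str, str]) -> Response:
    """Hypothetical edge that always holds version v1 of the resource."""
    if headers.get("If-None-Match") == 'W/"v1"':
        return 304, {"ETag": 'W/"v1"'}, b""
    return 200, {"ETag": 'W/"v1"'}, b'{"id": 1}'


cache = RevalidatingCache(fake_edge)
cache.get("/v1/users/1")  # 200: body stored with its ETag
cache.get("/v1/users/1")  # 304: local copy reused
```

The client still makes a round trip on every read, but an unchanged resource costs only headers, and it can never be served stale.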

With these principles in mind, we’ve chosen the following set of headers for cache control:

Surrogate-Control: max-age=$TTL
ETag: W/"$etag_value"
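On the origin side, that header pair could be produced along these lines. This sketch assumes the weak ETag is a truncated SHA-256 hash of a canonical JSON encoding of the payload; the post doesn’t say how Orion computes `$etag_value`, and the function names are hypothetical. It also answers a conditional request with 304 when the client’s tag matches, using weak comparison (ignoring the `W/` prefix), which is what HTTP specifies for If-None-Match. Splitting the header on commas is a simplification that works because these tags never contain commas.

```python
import hashlib
import json
from typing import Dict, Optional, Tuple


def encode(payload: dict) -> bytes:
    """Canonical JSON so that equal payloads always produce equal bytes."""
    return json.dumps(payload, sort_keys=True, separators=(",", ":")).encode("utf-8")


def weak_etag(payload: dict) -> str:
    """Weak ETag from a truncated SHA-256 of the canonical encoding (assumed scheme)."""
    return 'W/"' + hashlib.sha256(encode(payload)).hexdigest()[:16] + '"'


def _opaque(tag: str) -> str:
    """Strip the weak indicator so W/"x" and "x" compare equal (weak comparison)."""
    tag = tag.strip()
    return tag[2:] if tag.startswith("W/") else tag


def respond(payload: dict, ttl: int,
            if_none_match: Optional[str] = None) -> Tuple[int, Dict[str, str], bytes]:
    """Build the Surrogate-Control/ETag pair and honor conditional requests."""
    etag = weak_etag(payload)
    headers = {"Surrogate-Control": f"max-age={ttl}", "ETag": etag}
    if if_none_match is not None:
        candidates = {_opaque(t) for t in if_none_match.split(",")}
        if "*" in candidates or _opaque(etag) in candidates:
            return 304, headers, b""  # Client's copy is current; no body.
    return 200, headers, encode(payload)


status, headers, body = respond({"id": 1, "name": "Ada"}, ttl=1_209_600)
print(status, headers["Surrogate-Control"])  # 200 max-age=1209600
print(respond({"id": 1, "name": "Ada"}, 1_209_600, headers["ETag"])[0])  # 304
```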

Handling Per-Session Variation in API Return Values

There are some potential wrinkles with this approach. One is that the objects returned by the API can, and do, differ based on the permission level of the user making the request. If we simply cache one response per URL, we could end up exposing data to users who shouldn’t see it, or hiding data from users with broader permissions.

To solve this problem, we use the Vary header. Fastly has written an excellent blog post on the subject, but in a nutshell, Vary is a response header that names a set of request headers. The cache stores a separate copy of the response for each distinct combination of values of those request headers, and serves each request only the copy that matches.
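Conceptually, Vary turns the cache key into the URL plus the request’s value for each header it names. Below is a minimal sketch, assuming a hypothetical per-session request header `X-Session-Id` (the post doesn’t name the header Orion varies on). It treats a missing header as an empty value and ignores the special `Vary: *`.

```python
from typing import Dict, Tuple

VariantKey = Tuple[str, Tuple[Tuple[str, str], ...]]


def variant_key(url: str, vary: str, request_headers: Dict[str, str]) -> VariantKey:
    """Cache key = URL + the request's value for each header listed in Vary.

    Header names are case-insensitive, so both sides are lowercased. A header
    missing from the request contributes an empty value. `Vary: *` is not handled.
    """
    lowered = {name.lower(): value for name, value in request_headers.items()}
    names = sorted({n.strip().lower() for n in vary.split(",") if n.strip()})
    return url, tuple((name, lowered.get(name, "")) for name in names)


# Hypothetical per-session header; two sessions get two cache entries.
alice = variant_key("/v1/rooms", "X-Session-Id", {"X-Session-Id": "s-alice"})
bob = variant_key("/v1/rooms", "X-Session-Id", {"x-session-id": "s-bob"})
print(alice)         # ('/v1/rooms', (('x-session-id', 's-alice'),))
print(alice == bob)  # False
```

Headers not named in Vary (such as Accept, below) don’t affect the key, so unrelated request differences don’t multiply the number of cached copies.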

Dynamically Setting Cache TTLs

With Vary, Fastly will cache at most 200 distinct variations of a single object. If we vary by session, we’ll reach that limit quickly, and how quickly depends heavily on the resource: an object requested by many different sessions runs out of variations far sooner than one requested by only a few.
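The effect of a per-object cap can be illustrated with a small model. The 200 limit comes from the text above; the eviction policy (drop the oldest variant when the object is full) is an assumption made for illustration, not Fastly’s documented behavior, and all names are hypothetical.

```python
from collections import OrderedDict
from typing import Dict, Hashable, Optional

MAX_VARIANTS = 200  # Per-object limit from the text.


class VariantStore:
    """Stores up to `limit` variants per URL.

    Assumption for illustration: when an object is full, the oldest variant
    is evicted to make room. This is not Fastly's documented policy.
    """

    def __init__(self, limit: int = MAX_VARIANTS) -> None:
        self.limit = limit
        self.objects: Dict[str, "OrderedDict[Hashable, bytes]"] = {}
        self.evictions = 0

    def put(self, url: str, variant: Hashable, body: bytes) -> None:
        slots = self.objects.setdefault(url, OrderedDict())
        if variant in slots:
            slots.move_to_end(variant)  # Refreshing an existing variant.
        elif len(slots) >= self.limit:
            slots.popitem(last=False)  # Full: drop the oldest variant.
            self.evictions += 1
        slots[variant] = body

    def get(self, url: str, variant: Hashable) -> Optional[bytes]:
        return self.objects.get(url, {}).get(variant)


store = VariantStore()
for i in range(250):  # 250 distinct sessions request the same object
    store.put("/v1/org/members", f"session-{i}", b"{}")
print(store.evictions)                        # 50
print(len(store.objects["/v1/org/members"]))  # 200
```

Under this model, the 50 evicted sessions would miss the cache on their next request and go back to the origin, which is why large organizations with many active sessions churn through an object’s variations fastest.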

The other part of our solution is using LaunchDarkly, a feature flag management service, to set the cache TTL dynamically. This gives us the flexibility to tune our caching instantly and provide the best possible user experience.

For example, suppose we’ve decided that 14 days (1,209,600 seconds) is generally a good cache TTL, but a few very large organizations hit the 200-variation limit quickly.

In LaunchDarkly, we can set a lower TTL for just those organizations. And we can do it almost instantly, without deploying new code to our API server or changing our Fastly VCL (Varnish Configuration Language) configuration.
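The TTL lookup itself can be small. In this sketch, a plain dictionary stands in for the LaunchDarkly flag evaluation (in production that call would go through the LaunchDarkly SDK); the organization IDs and the one-hour override are hypothetical values.

```python
from typing import Callable, Dict, Optional

DEFAULT_TTL = 14 * 24 * 60 * 60  # 14 days = 1,209,600 seconds

# Stand-in for a LaunchDarkly flag evaluation keyed by organization.
# Returns an override TTL in seconds, or None to use the default.
TtlFlag = Callable[[str], Optional[int]]


def stub_flag(overrides: Dict[str, int]) -> TtlFlag:
    """Hypothetical flag source backed by a dict, for local testing."""
    return overrides.get


def ttl_for_org(org_id: str, flag: TtlFlag) -> int:
    """Use the flag's override when it is a sane value, else the default."""
    override = flag(org_id)
    if override is None or override < 0:
        return DEFAULT_TTL
    return override


def surrogate_control(org_id: str, flag: TtlFlag) -> str:
    """Value for the Surrogate-Control header on this org's responses."""
    return f"max-age={ttl_for_org(org_id, flag)}"


flag = stub_flag({"org-very-large": 3600})  # hypothetical 1-hour override
print(surrogate_control("org-typical", flag))     # max-age=1209600
print(surrogate_control("org-very-large", flag))  # max-age=3600
```

One consequence to keep in mind: a cache generally fixes an object’s TTL when the response is stored, so a lowered flag value applies to newly cached responses, while objects already in the cache keep their original TTL until they expire or are purged.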

Looking Ahead

We’re always looking for ways to improve our service, and we’re considering further improvements to our edge caching. We’ll keep you updated.

In the meantime, if you want to learn more about what we’re working on at Orion, explore our open roles.